Unlock transparency, build AI responsibly

Powerful, self-serve AI-risk intelligence for developers, end-users and regulators.

Next-generation AI-risk monitoring integrated with multiple technology stacks

WHAT IS AI-RISK?

AI can & does go wrong

Any failure of AI-enabled automation in the regulated enterprise creates operational and compliance liability with novel, dynamic risks for both the data office and the three lines of defence. This necessitates risk monitoring of AI at scale.

AI Controls Repository

Taxonomy of re-usable artefacts and internal controls libraries to ensure that your automated systems are within your risk appetite, while assuring policy compliance.

AI Risk Observability

Single pane of glass for data scientists, developers and risk teams to collaborate across the automation value chain, enabling risk transparency and visibility.

AI Outcomes Interpretability

Integrated explainability to demonstrate machine-learning risk provenance to executive stakeholders and regulators, fostering trust and accountability.

Are you AI ready?

Audit your readiness & discover newer, emerging risks in your AI-enabled product or service.

Sign up for a free AI-risk checklist!

ACCELERATE

Turn AI-risk into Opportunity!

Gain 360° visibility and transparency into all your AI-risks with a comprehensive pre-built taxonomy.

Innovate with confidence & trust in your AI.

AI Use-Cases

Unique AI-risks

BENEFITS

See how it all comes together

With Zupervise, you can now analyse risks across multiple layers of AI: models, training data, inputs & outputs.

Step 1 - Analyse

Identify your AI Risk universe

Discover risks in your current business process design. Enable out-of-the-box AI Risk Controls and balance AI risk appetite against automation experimentation.

Step 2 - Optimise

Unify AI Risk Data

Foster a culture of AI Risk mitigation and make intelligent, informed risk decisions from a single shared system of record. Govern AI Risks originating from the quality of historical data and of evaluation and benchmark datasets.

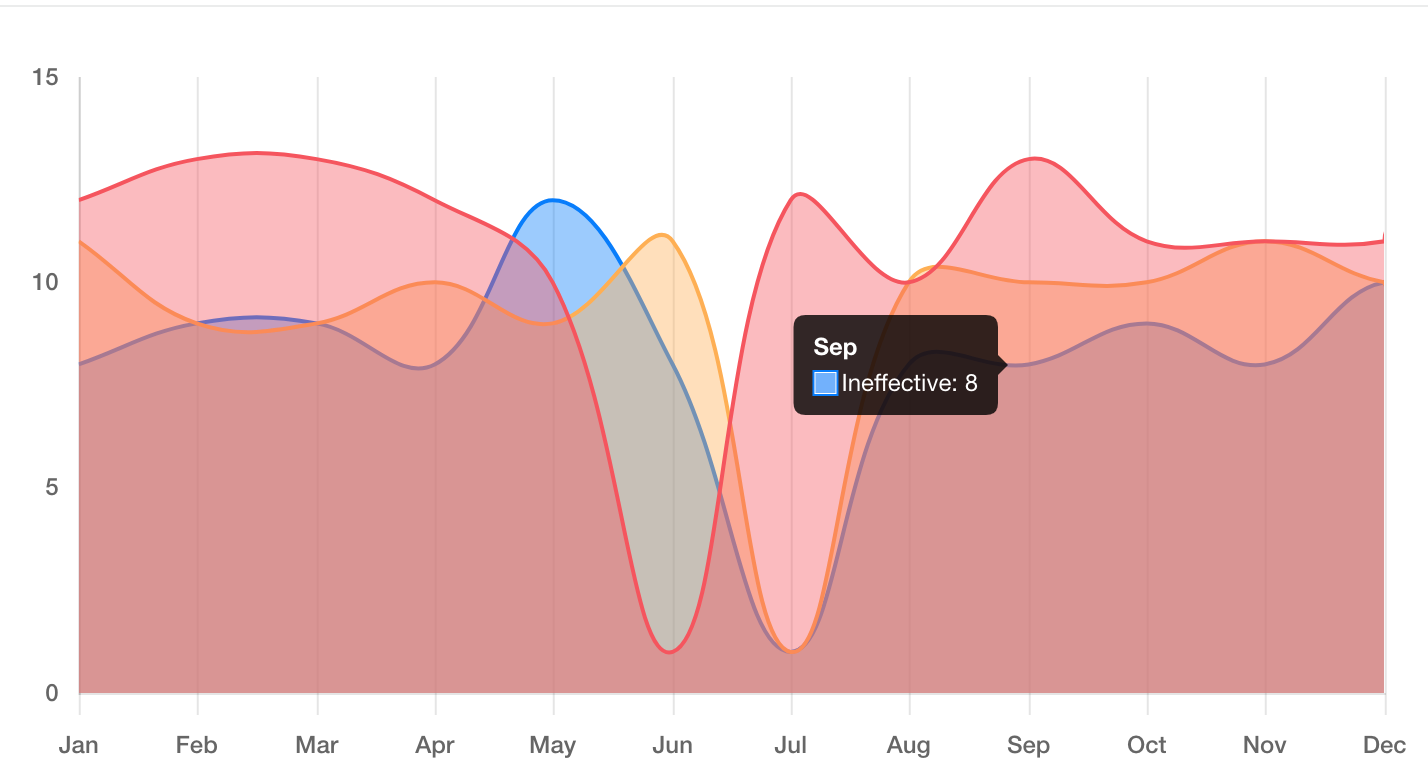

Step 3 - Govern

Gain Visibility into AI Risk Trends

Delineate accountability and make it easier to place trust in your AI investments with data-driven insights into emerging AI Risks. For each AI Risk, monitor multiple signals, including changes in its attributes, so you can forecast a material effect on your risk appetite.

OUTCOMES

Streamline AI-risk Transparency

Identify AI-Risks

Build your own AI Risk and AI controls taxonomy, or re-use our artefacts, templates and libraries to develop forward-looking internal controls.

Break Down Governance Silos

A single-pane-of-glass dashboard that integrates source, risk and operational data to improve transparency in automation deployments & outcomes.

Demonstrate Regulatory Compliance

Articulate algorithmic risk provenance to executive stakeholders and regulators on-demand.

SOLUTIONS

Questions we help you answer

Discover diverse implications of AI Risks

Regulatory

Will your AI comply with proposed regulatory policies & legislation?

Cyber

Are your AI assets secured against adversarial attacks?

Privacy

Does your AI process sensitive data for automated decisioning?

Third Party

Can you vet your AI technology vendor's deployments?

Conduct

How do humans in the loop interpret your AI's reasoning provenance?

ESG

Is your AI ethical, responsible and trustworthy?

Let’s do this

Get started with Zupervise

Book a demo with an AI-risk expert to see Zupervise in action.